I also tried importing from the abortive Excel sheet I made. In fact, it's been forced to all uppercase. I don't think any value is over 4000 characters (the longest I've located is 2027) and I'm pretty sure the character set is pure ascii-7. But as best I have been able to measure it, there's nothing in it which should have failed. The good part is that it does identify a column name where it failed, namely the long text column. But it ignores this setting - it always fails no matter how you set the tolerance, per column or globally. And thankfully, it has a setting that allows you to tell it per column whether to fail on conversion errors or ignore them. With the import wizard, on the other hand, it doesn't guess column types at all, and I have to manually set the ones I know. But when I run the import, it says that some column would be truncated or otherwise fail to convert, and it refuses to say which column is the one having trouble.

A few others I knew might be longer than the default guessed length of 50 so I bumped up the sizes.

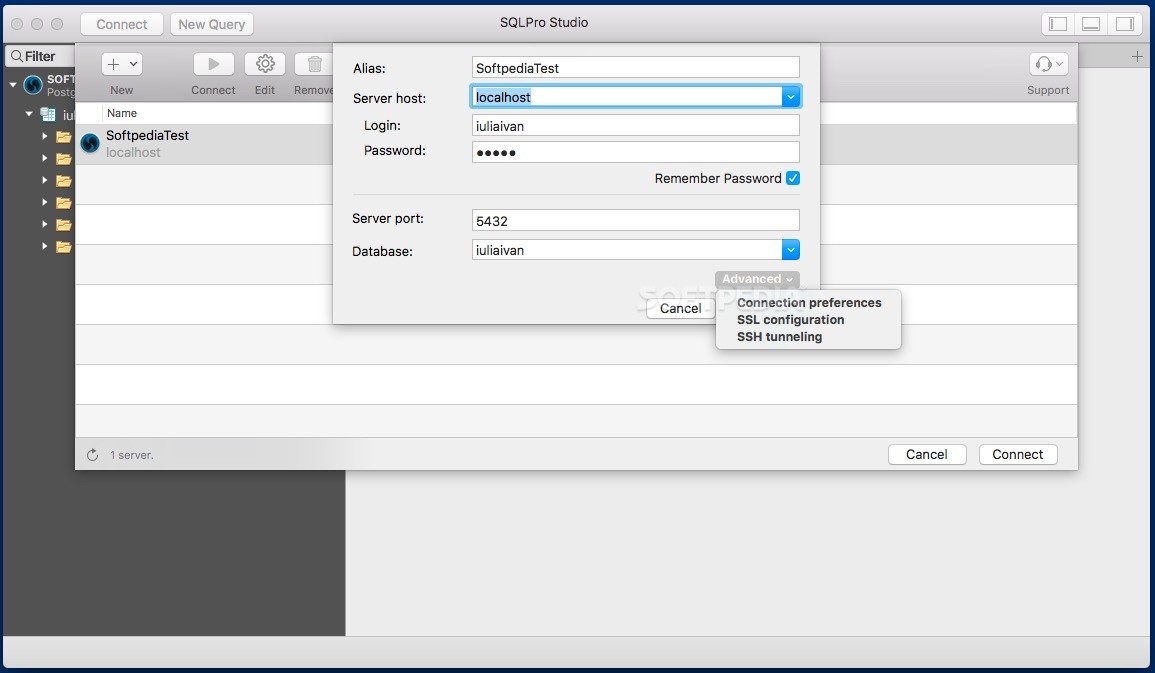

One column is long text, so I've tried setting it to nvarchar(4000) or nvarchar(max) or ntext. With SSMS, it seems to do a very good job of parsing the file and guessing the column types, but it bases the lengths on only the first few hundred rows. I see two import paths available: SqlServer Management Studio's Import Flat File command, and the Import and Export Data wizard. I am trying to import it into Sql Server 2017 Express. Is there anything with my table DDL that would slow down an import? CREATE TABLE `some_schema`.I've got this tab-delimited flat file with fifty columns and two million rows. I have reached out to their support to try and understand what command Sequel Pro is using to load data. Sequel Pro does import the data in 30 seconds, even with the key. the tags table and assumed it must be the key slowing things down. I looked at the DDL for what I had been testing with vs. The reason why I suspected it was the key is that I tried to use the same tags.csv dataset that the other commenter used, but for that table (and for the same DDL that the commenter used) there was no speed difference between DataGrip and Sequel Pro. I tried to import the data without the key, but it still took about 2m40s via DataGrip. 16:03:39 finished - execution time: 2 m 50 s 437 ms, fetching time: 1 ms, total update count: 10000 INSERT INTO import_perf_test_tags_datagrip (user_id, email) VALUES (?, ?) 16:00:49 finished - execution time: 87 ms, fetching time: 106 ms, total result sets count: 1 SELECT t.* FROM import_perf_test_tags_datagrip t 16:00:48 finished - execution time: 171 ms Is it possible to see the exact command that DataGrip is using to load data so that I can cross reference against what my other SQL client is doing?Īgain, this is all I see in the DataGrip logs: I did do some testing on a table without any keys specified and there was no performance discrepancy. 
I have another MySQL client on my mac (Sequel Pro) that will import a 10,000 row file with two columns (IDs and email addresses) in about 30 seconds, whereas DataGrip takes almost 3 minutes. I am only experiencing performance issues with the import. Server ping isn't very straightforward to measure since I'm connecting via an SSH tunnel.
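For the SQL Server flat-file import, one way to double-check the "nothing in it should have failed" measurement is to profile every column of the file before importing. The sketch below is only an illustration, not something either import tool does: `profile_columns` is a hypothetical helper, and it assumes the first line is a header with unique column names and that fields are not quoted. It reports the longest value per column, how many values contain non-ASCII characters, and which rows have a different number of fields than the header (a stray tab or line break inside the long text column would show up there).

```python
import csv
import io


def profile_columns(lines, delimiter="\t"):
    """Scan delimited text and collect simple per-column statistics.

    `lines` is any iterable of text lines (an open file works).
    Returns (stats, ragged_rows): stats maps each header name to its
    longest value length, the 1-based line number of that value, and a
    count of values containing non-ASCII characters; ragged_rows lists
    line numbers whose field count differs from the header's.
    """
    # QUOTE_NONE: treat quote characters as ordinary data (an assumption
    # about the export format; change it if the file quotes its fields).
    reader = csv.reader(lines, delimiter=delimiter, quoting=csv.QUOTE_NONE)
    header = next(reader)
    stats = {name: {"max_len": 0, "max_row": None, "non_ascii": 0}
             for name in header}
    ragged_rows = []
    for row_number, row in enumerate(reader, start=2):  # header is line 1
        if len(row) != len(header):
            ragged_rows.append(row_number)
        for name, value in zip(header, row):
            entry = stats[name]
            if len(value) > entry["max_len"]:
                entry["max_len"] = len(value)
                entry["max_row"] = row_number
            if not value.isascii():
                entry["non_ascii"] += 1
    return stats, ragged_rows


# Tiny in-memory sample; the last line has an extra tab on purpose.
sample = "id\tnotes\n1\tHELLO\n2\tCAF\u00c9 AU LAIT\n3\tA\tB\n"
stats, ragged = profile_columns(io.StringIO(sample))
for name, entry in stats.items():
    print(name, entry)
print("rows with unexpected field count:", ragged)
```

On the real file, the same function could be called on `open(path, newline="", encoding="utf-8", errors="replace")`, where `path` and the encoding are assumptions. Any column whose `max_len` exceeds its declared size, or any entry in the ragged-row list, would be a candidate for the truncation error.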
